This article is also available in Chinese閱讀中文版 →

Private LLM vs API: 3 Ways to Deploy AI in Your Company — Cost, Security & Trade-offs Compared

TL;DR

| Path | Solution | Who Sees Your Data | Cost / Person / Month | Time to Start |

|---|---|---|---|---|

| 🍽️ Eat at a restaurant | Claude Pro / ChatGPT | AI company servers | $20 (heavily subsidized) | Today |

| 🧑🍳 Rent a kitchen, hire their chef | AWS Bedrock / Azure OpenAI | Your AWS / Azure account | API pay-as-you-go (much more than subscription) | 1-2 weeks |

| 🏠 Build your own kitchen | Ollama + Llama / gpt-oss | Your own servers | Hardware starts at millions + ML engineers | Months |

The difference isn’t security level — it’s how much you’re willing to pay to keep data out of the AI company’s environment.

Introduction

“Is it safe to send company data to AI?”

“Doesn’t that put our data in the cloud? What about data leaks?”

“Should we just host our own LLM so data stays in-house?”

These are the three questions I hear most when helping companies adopt AI. The anxiety escalates from vague unease, to “cloud = unsafe,” to “let’s just build it ourselves.”

But self-hosting a production-ready LLM system starts at millions in hardware alone. And you can’t even get the best models — because neither Anthropic nor OpenAI sell the weights for their flagship models.

Self-hosting isn’t the only answer, and in most cases it’s not the best one. This decision comes down to three things:

- How sensitive is your data?

- Who are your employees?

- How much are you willing to pay to keep data out of the AI company’s environment?

This guide lays out three paths — with real costs, official data policies, and cited sources — to help you make the right call.

Three Paths: Eat Out, Rent a Kitchen, or Build Your Own

Imagine you need to feed your employees great food every day.

Eat at a restaurant. You take everyone to a Michelin-starred restaurant. Top-tier chefs, food arrives fast, you don’t manage the kitchen. Your ingredients (data) pass through the restaurant’s kitchen, but they have strict hygiene certifications, clear everything out shortly after use, and won’t steal your recipes to open a competing restaurant.

Rent a kitchen, hire their chef. You rent a commercial kitchen and invite the same Michelin chef to cook there. Same quality food, but your ingredients stay in your rented kitchen the entire time — the chef’s employer (the AI company) never touches them. The catch: no prix fixe menu discounts, and your kitchen only has the back-of-house — no front-of-house for ordering. Your employees need to figure out how to place orders themselves.

Build your own kitchen. You build a kitchen from scratch and hire your own cooks. Your ingredients are 100% in your hands. But Michelin chefs don’t freelance — you can only hire decent but not-quite-top-tier cooks. Building a kitchen requires buying equipment (GPUs), hiring maintenance staff, and paying utilities (power + cooling), and those costs run whether or not anyone is eating.

| 🍽️ Eat at a Restaurant | 🧑🍳 Rent a Kitchen | 🏠 Build Your Own | |

|---|---|---|---|

| Solution | Claude Pro / ChatGPT | AWS Bedrock / Azure OpenAI | Ollama + Llama / gpt-oss |

| Who sees your data | AI company servers | Your AWS / GCP / Azure account | Your own servers |

| Chef (model) | Michelin (Opus / GPT-5) | Same Michelin chef | Decent home cook (open-source) |

| Need GPUs? | No | No (cloud provider manages) | Yes, lots |

| Frontend UI | claude.ai / ChatGPT full interface | ⚠️ API only, no UI | Build your own |

| Pricing model | Subscription (heavily subsidized) | Pay-as-you-go (API pricing) | Upfront capital + ongoing maintenance |

| Months with no usage | $20 (subscription fee) | $0 | GPUs still depreciating and consuming power |

| Time to start | Today | 1-2 weeks | Months |

Sources: Anthropic Pricing, AWS Bedrock Pricing, Azure OpenAI Pricing

Path 1: Eat at a Restaurant (API / Subscription)

Solutions: Claude Pro ($20/mo), ChatGPT Plus ($20/mo), direct API calls

This is the right starting point for most companies.

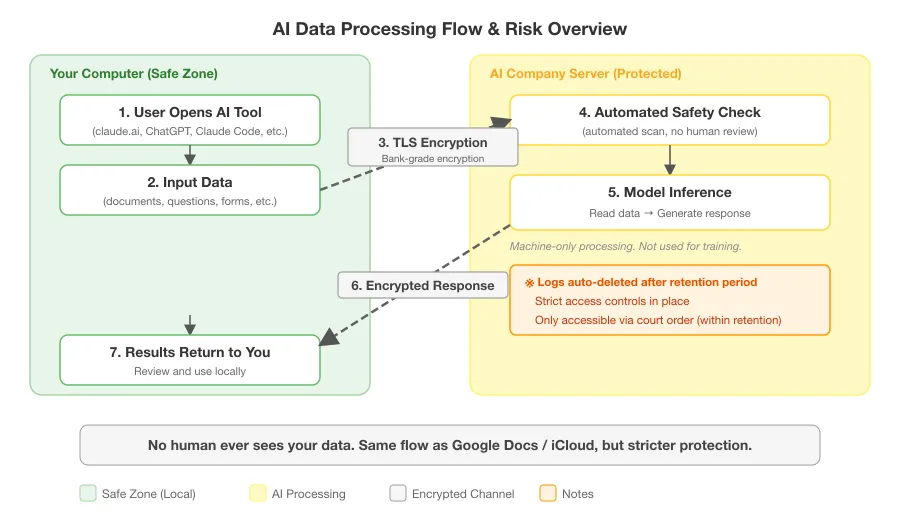

How is your data processed?

“Is it safe to send data to AI?” Instead of guessing, let’s break it down — from the moment data leaves your computer to the moment results come back, what happens at each step, who can see what, and how risky it is:

| Step | What Happens | Who Can See Your Data | Risk |

|---|---|---|---|

| 1. Encryption | Data encrypted before leaving your computer | No one | Very low — bank-grade encryption |

| 2. Reaches server | Enters Anthropic’s AWS data center | No one — machines receive automatically | Low — passes international security audits, bank-grade facility |

| 3. Safety check | Automated scan | No one — unless a severe violation is flagged (only triggered by requests for seriously illegal content like violence or criminal instruction; normal business work will never trigger this) | Low |

| 4. Model inference | AI reads data, generates response | No one — pure machine processing | Low — not used for training, not stored in model |

| 5. Return results | Encrypted back to your computer | No one | Very low |

※ Server-side processing logs are encrypted and auto-deleted after a retention period of 7-30 days depending on plan. Never used for model training. Accessible only via court order within the retention period.

Sources: Anthropic Certifications, Anthropic — Data Retention

How does it compare to tools you already use?

Most employees already use Google Docs for company data without anyone raising concerns. But Claude API’s protections are actually stricter:

| Item | Claude API | Google Docs / iCloud |

|---|---|---|

| Data encrypted in transit | ✅ | ✅ |

| Cloud data retention | 7-30 days then deleted (by plan) | Stored indefinitely |

| Used for training / improvement | No | Google uses data to improve services |

| Security certifications | International security audits (ongoing) + AI-specific certification | International security audits |

| Humans see your content | No (machine-only) | Possible (support staff, content reviewers) |

If you’re not worried about Google Docs, Claude API is at least as safe.

Subscription vs. API pay-as-you-go

Here’s something most people don’t know — subscriptions are all-you-can-eat (with rate limits), while API is pay-per-use. For the same usage, API costs far more than the $20/mo subscription price.

AI companies heavily subsidize subscription plans to build user habits. OpenAI’s CEO publicly admitted their subscription plans are losing money because users consume more than expected.

What does this mean? If you put employees on Claude Pro or ChatGPT Plus, they get heavy usage for $20/mo each (there are cooldown periods if you go too fast, but more than enough for daily work). But if you go through Bedrock / Azure OpenAI’s API pay-as-you-go, the same usage costs significantly more — because there’s no subsidy.

This cost gap matters a lot when we look at Path 2.

Who is this for?

- General business documents, admin work, content creation

- Industries without strict regulatory requirements

- Companies that want the fastest start and best cost-efficiency

- Time to start: today

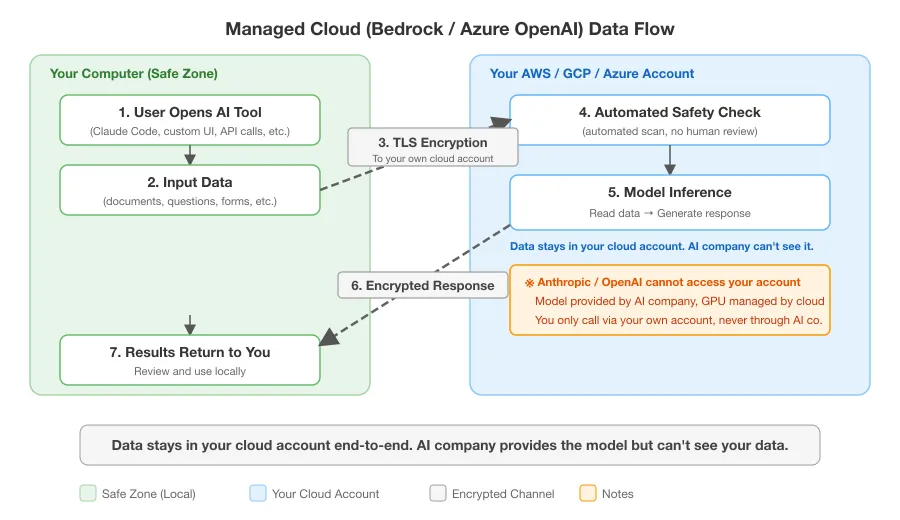

Path 2: Rent a Kitchen (Managed Cloud)

Solutions: AWS Bedrock (Claude), Azure OpenAI Service (GPT), GCP Vertex AI (Claude)

Is Bedrock self-hosting? No.

This is the most common misconception. Bedrock is not “buy a copy of Claude and run it on your own server.”

Back to the analogy: The kitchen is rented from AWS, the chef is sent by Anthropic. Your ingredients (data) stay in your kitchen the entire time — the chef’s employer (the AI company) never touches them.

You don’t need to manage GPUs, models, or know anything about machine learning. It’s like using any other AWS service (S3, RDS) — just another API endpoint. Billing goes through AWS too, no separate Anthropic payment.

The key difference: data entry and exit points are bound to your AWS account. Anthropic can’t see it.

Anthropic’s official documentation is explicit:

“The technology provided by Anthropic to AWS to enable Customer’s access to the Services does not give Anthropic access to Customer’s AWS instance, including Prompts or Outputs.”

Sources: Claude on Amazon Bedrock, AWS Bedrock Data Protection, Azure OpenAI Data Privacy

Same token pricing, but remember the subsidy gap

Claude pricing on Bedrock is identical to the direct Anthropic API:

| Model | Anthropic API | AWS Bedrock |

|---|---|---|

| Sonnet 4.6 Input | $3 / M tokens | $3 / M tokens |

| Sonnet 4.6 Output | $15 / M tokens | $15 / M tokens |

| Opus 4.6 Input | $5 / M tokens | $5 / M tokens |

| Opus 4.6 Output | $25 / M tokens | $25 / M tokens |

Billing? You only pay AWS. AWS and Anthropic settle between themselves — nothing to do with you.

Source: AWS Bedrock Pricing

Looks the same? Don’t forget the subsidy gap from Path 1 — subscriptions are all-you-can-eat, Bedrock is pay-per-token. For the same employee doing the same amount of work, Bedrock costs significantly more than the $20/mo subscription. That’s the price of keeping data in your own cloud account.

If your company already uses AWS, the cloud infrastructure costs are existing overhead. But the token costs are real additional spend.

The frontend problem: the trap most people miss

Many companies evaluate “Bedrock is more secure” and decide to use it. But they miss one thing:

Bedrock is API-only. No chat interface.

Claude Pro has claude.ai — employees open a browser and start chatting, uploading files, managing conversations. Bedrock has nothing — just an API endpoint.

| User Type | Frontend Problem? | Solution |

|---|---|---|

| Non-technical employees | ⚠️ Yes — can’t use an API | Build or buy a third-party UI (Typingmind, Librechat, etc.), dev + maintenance costs far exceed $20/mo |

| Engineers using Claude Code | ✅ No — CLI needs no frontend | Two environment variables to switch to Bedrock |

| System automation | ✅ No — API to API | No human-facing UI needed |

I’ve seen it happen: the boss says “Bedrock is more secure, let’s use Bedrock.” IT starts building an internal chat interface. Two months later the interface is still in development and employees still have no AI to use. The efficiency problem they were trying to solve just got delayed by two months.

Claude Code + Bedrock: the clean path for engineering teams

If your team already uses Claude Code, Bedrock is the smoothest path — Claude Code is a CLI, no frontend needed. Just set two environment variables to switch to Bedrock, everything else stays the same. IT sets up AWS IAM permissions, engineers are good to go, data flows through your company’s AWS VPC, Anthropic can’t touch it.

Source: Claude Code on Amazon Bedrock

Who is this for?

Your data processing environment must stay on infrastructure that has already passed your internal compliance audit, with no new third-party vendors — that’s the core reason to go with Bedrock / Azure OpenAI. If your AWS/Azure is already on the approved list, adding Bedrock is much simpler than auditing Anthropic’s API from scratch.

But be prepared: Bedrock uses API pay-as-you-go with no subscription subsidy. The same usage will cost significantly more. That’s the price of compliance.

- Companies already on AWS / GCP / Azure with compliance requirements (finance, healthcare, etc.)

- Engineering teams using Claude Code (the smoothest combination)

- Time to start: 1-2 weeks (IT setup)

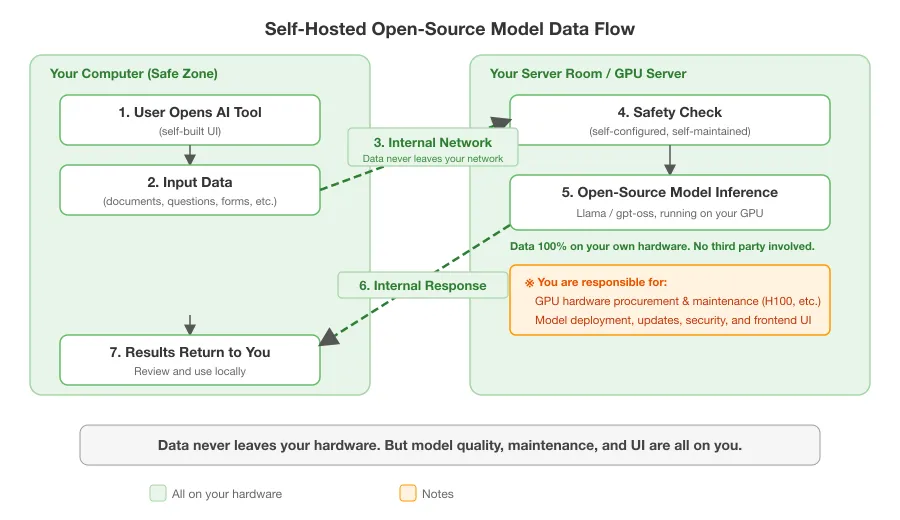

Path 3: Build Your Own Kitchen (Self-Hosted Open-Source)

Solutions: Ollama + Llama, gpt-oss, vLLM inference engine

Can you run Claude or GPT-5 on your own servers? No.

Here’s a fact many people don’t know.

You cannot “buy a copy of Claude Opus and run it in your server room.” Same for GPT-5.

Neither Anthropic nor OpenAI release the weights for their flagship models.

| Model | Can you download and run it yourself? |

|---|---|

| Claude Opus / Sonnet | ❌ Not available |

| GPT-5 / GPT-5.4 | ❌ Not available |

Sources: anthropic.com/company, OpenAI GPT-5 Models

Want to self-host? Open-source models only.

The chefs you can actually hire

| Model | Source | Parameters | Hardware | Capability |

|---|---|---|---|---|

| gpt-oss-120b | OpenAI (Apache 2.0) | 117B (MoE, 5.1B active) | Single H100 (80GB) | AIME 2025: 97.9% (beats o4-mini), SWE-bench: 62.4% (o4-mini: 68.1%) |

| gpt-oss-20b | OpenAI (Apache 2.0) | 21B (MoE, 3.6B active) | 16GB GPU (needs MXFP4 quantization) | Close to o3-mini |

| Llama 3.1 405B | Meta | 405B | Multiple GPUs | Upper-mid |

gpt-oss-120b beats o4-mini in math but falls slightly behind in software engineering. Overall roughly on par. Not bad, but still a notable gap compared to Claude Opus or GPT-5.

Sources: OpenAI gpt-oss, gpt-oss Model Card

How much does self-hosting cost?

API and subscription plans are pay-as-you-go — zero cost in months with no usage.

Self-hosting isn’t. GPUs depreciate and consume power whether anyone uses them or not.

| Cost Item | Estimate |

|---|---|

| GPU hardware (one H100) | ~$30,000-40,000 USD |

| GPUs needed for large models | Several to hundreds, depending on model size |

| ML engineers | $100K+ / year |

| Power, data center, cooling | Ongoing |

| Model updates | Manual redeployment for each new version |

| Frontend UI | Build and maintain yourself |

gpt-oss-120b only needs a single H100 to run, which is a much lower barrier than before. But if you need to serve an entire company with multiple concurrent instances, hardware requirements scale up fast.

Who is this for?

- Regulations explicitly prohibit data leaving the internal network (defense, certain government agencies)

- Have your own ML team and GPU cluster

- Can accept models that are less capable than frontier

- Time to start: months

Decision Framework

Flowchart

How sensitive is your data? │ ├─ General business data │ → Eat at a restaurant: Claude Pro / ChatGPT, $20/mo/person │ (API security is already stricter than Google Docs) │ ├─ Compliance requirements (finance / healthcare / data protection laws) │ → Rent a kitchen: Bedrock / Azure OpenAI │ │ │ ├─ Employees are non-technical → Frontend problem, high cost │ └─ Employees are engineers → Claude Code + Bedrock, cleanest path │ └─ Data cannot leave your own servers → Build your own kitchen: open-source models + own GPUs (millions to start, model quality trade-off)By employee type

| Who are your employees | Best option | Why |

|---|---|---|

| Non-technical (admin, marketing, sales) | Claude Pro / ChatGPT | Full UI, heavily subsidized, start today |

| Engineers / dev teams | Claude Code (can route through Bedrock) | No frontend needed, Bedrock for data isolation |

| System automation (chatbots, reports) | API or Bedrock API | API-to-API, controllable usage |

| Defense / semiconductor IT | Self-hosted open-source | Regulatory requirement |

My Pick: Why I Recommend Claude

What follows is my personal opinion. Every claim has a cited source — you can judge for yourself.

When helping companies adopt AI, I recommend Claude. Not just for model capability.

Anthropic’s stance on safety

Anthropic is the most aggressive on safety among major AI companies:

- February 2026: The US Department of Defense asked Anthropic to remove contract clauses banning “autonomous weapons” and “mass surveillance.” Anthropic’s CEO publicly refused, stating he “couldn’t in good conscience agree.” The DoD designated Anthropic a “supply chain risk,” ordered all federal agencies to stop using Claude, with estimated revenue loss in the billions. Anthropic accepted the loss and filed a lawsuit against the US government in March 2026.

- Founded on safety: Anthropic was founded by former OpenAI researchers in 2021 — precisely because they believed OpenAI was prioritizing commercial interests over safety. Their Responsible Scaling Policy v3.0 is publicly available.

A company willing to walk away from billions in government contracts over safety principles — that tells you their commitment to data protection isn’t just marketing copy.

Sources: ABC News, TechPolicy.Press Timeline, EFF Analysis

Claude’s enterprise market position

Claude isn’t just safe — it’s the enterprise market leader:

| Metric | Claude (Anthropic) | ChatGPT (OpenAI) |

|---|---|---|

| Enterprise market share | ~1/3 | ~25% |

| Fortune 10 adoption | 8 out of 10 | — |

| Enterprise customers spending >$1M/year | 500+ | Not disclosed |

| Annualized revenue (Feb 2026) | $14B | $25B |

Sources: Fortune, Anthropic Series G, Yahoo Finance

Conclusion

Most companies don’t need to spend millions self-hosting an LLM to get adequate data protection.

Your employees already use Google Docs every day — that’s cloud too. The real question isn’t “should we use the cloud?” It’s how much are you willing to pay to keep data out of the AI company’s environment?

| Your Answer | Which Path | Cost |

|---|---|---|

| Not much | Claude Pro / ChatGPT | $20/mo, start today |

| Data can’t go through AI company | Bedrock / Azure OpenAI | API pay-as-you-go (much more than subscription), need to build frontend UI, 1-2 weeks |

| Data can’t leave our servers | Self-hosted open-source | Millions to start, months to deploy |

Picking the wrong path doesn’t make you less secure — it means your employees wait six months and still don’t have AI.

Enjoyed this? Connect with me on LinkedIn — I’m always happy to chat about AI, systems, and building things solo.

FAQ

- Will my company data leak if we use AI?

- It depends on your deployment method. With API/subscription plans, data passes through the AI company's servers but is encrypted and not used for training (for commercial users). With managed cloud (AWS Bedrock), data stays in your own cloud account — the AI company can't see it. With self-hosted models, data never leaves your hardware. Different paths, different costs.

- Is AWS Bedrock the same as self-hosting an LLM?

- No. Bedrock is a managed service — the model is provided by Anthropic and runs on AWS GPUs. You don't need your own hardware or ML expertise. The key difference is that data stays within your AWS account and Anthropic cannot access it.

- Can you run Claude or GPT-5 on your own servers?

- No. Neither Anthropic nor OpenAI release the weights for their flagship models (Claude Opus, GPT-5). To self-host, you must use open-source models like Meta's Llama or OpenAI's gpt-oss series, which are less capable than the proprietary frontier models.

- How much more expensive is API pay-as-you-go vs subscription?

- Subscription plans like Claude Pro ($20/mo) are heavily subsidized — OpenAI's CEO publicly admitted their subscription plans lose money. The same usage via API pay-as-you-go (used by Bedrock) costs significantly more, since there's no subsidy.

- What's the cheapest way to deploy AI with data isolation?

- For engineering teams already using Claude Code, Bedrock is the cleanest path — two environment variables to switch, no frontend needed, same token pricing. For non-technical employees who need a chat interface, the cost of building or buying a frontend on top of Bedrock far exceeds the $20/mo subscription.